Can AI read music? Part 2

Two years later, LLMs still struggle with reading sheet music

So I had this idea…

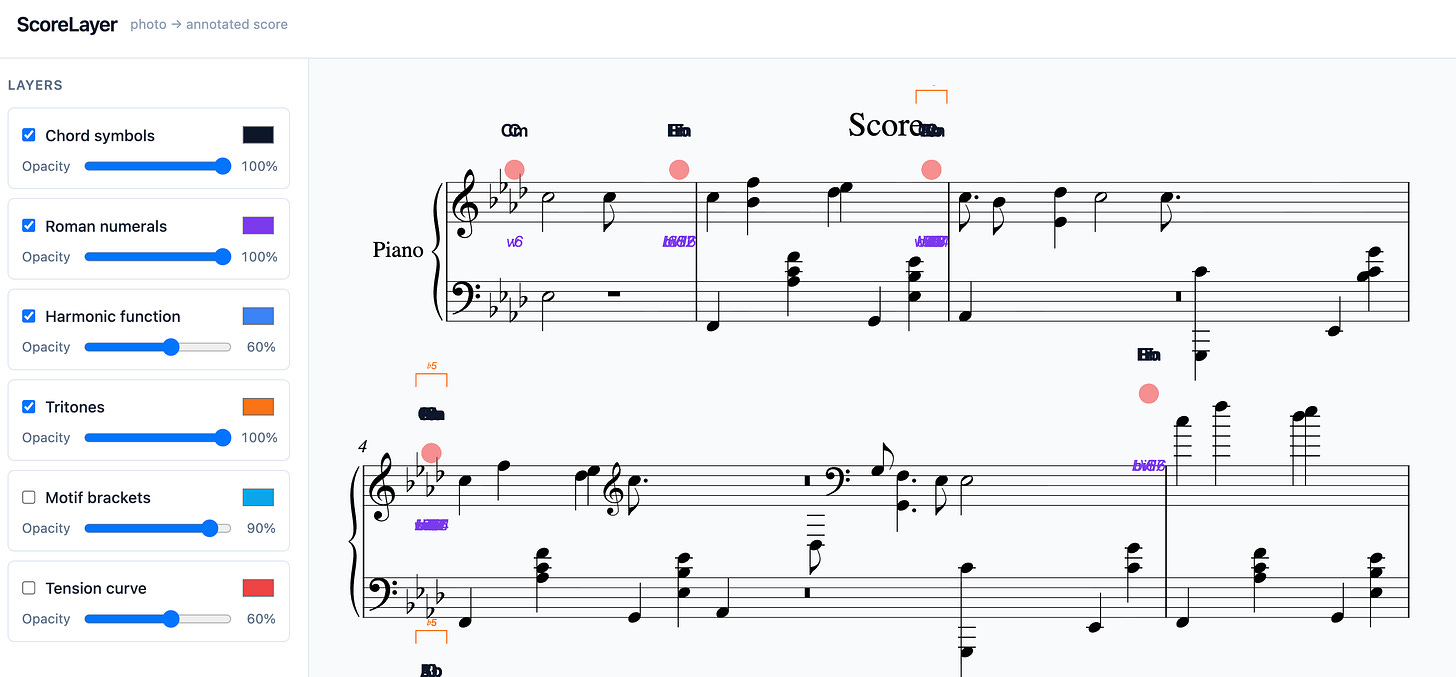

As a musician and a music theory nerd, I had an idea for a music theory app.

I wanted to upload a photo of sheet music and get back an annotated score with different layers of analysis. I would be able to hover over a note and see its chord symbol, Roman numeral, and harmonic function, or map out motifs and how they morph throughout a piece.

The first step is reading a photo of sheet music: identifying and interpreting its notes, chords, and harmonies.

Two years ago, I wrote an article about whether AI could read sheet music and concluded that state-of-the-art multimodal LLMs were “sorely lacking in visual reasoning capabilities when it comes to reading music.” That was April 2024.

Since then, there’s been a lot of discourse about the looming arrival of artificial general intelligence (AGI), about AI music-generation models good enough to fool people (such as Google DeepMind’s Lyria 3, Suno, and Udio), and a general sense that frontier models can do basically anything you throw at them.

So reading a few notes from an image shouldn’t be that hard, right?

Where LLMs have improved in the past two years

I decided to run the same casual experiment I did in 2024 by asking similar questions to the same chatbot interfaces.

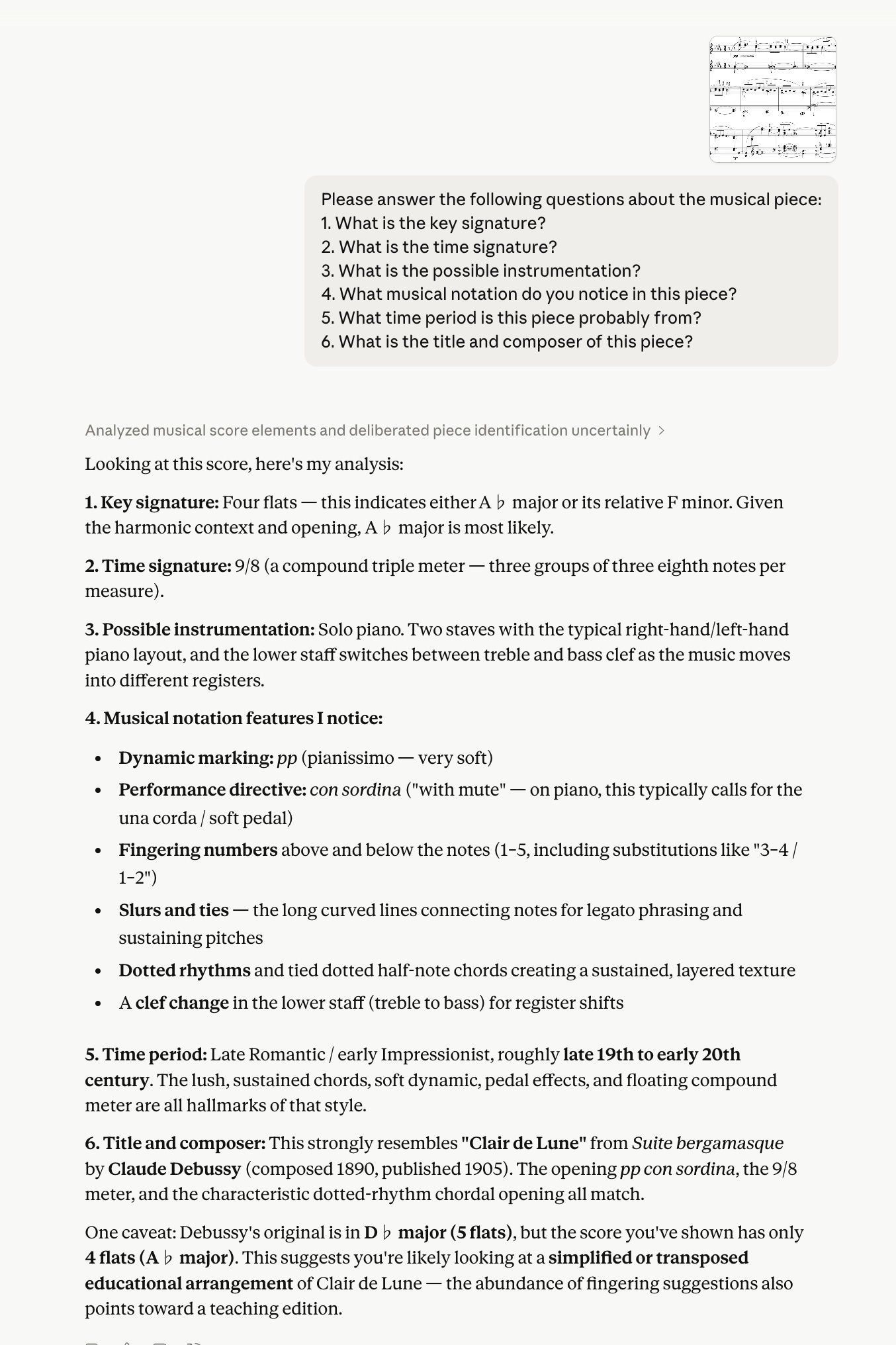

I tested the chatbot interfaces of three frontier models (Claude Opus 4.7, GPT 5.4, and Gemini 3.0 Pro) on roughly five pieces of sheet music, asking questions of the kind a curious user might pose (such as “what is this piece?” or “what key is it in?”). I cropped the title and composer out of every image.

And let me tell you: some things, at least, have improved markedly.

In my previous article, LLMs struggled to read the key and time signatures of most sheet music correctly. Now this is a breeze.

Well, mostly. The models usually identify the key and time signature correctly, with a few mistakes here and there.

I find it a little ironic that Claude Opus gets the key of Claude Debussy’s famous piece wrong.

LLMs have also improved at identifying the sheet music for popular pieces. Take this with a grain of salt, since I only tested five pieces of music.

All three models correctly identified both Clair de Lune and Howl’s Moving Castle. Perhaps these two pieces are extremely common in all three models’ training data, but I can only speculate.

All three models’ answers for the Harry Potter excerpt (a piano rendition of Hedwig’s Theme) were quite interesting, since they were all different (and wrong, and confident). It’s actually fun to read their best guesses, especially Claude reasoning its way through by identifying the piece’s time and key signatures.

Claude:

This appears to be “Comptine d’un autre été: L’après-midi” by Yann Tiersen, from the soundtrack to the 2001 film Amélie (Le Fabuleux Destin d’Amélie Poulain). The 3/8 meter, E minor key, opening mp dotted rhythm, the characteristic accented chord clusters mid-piece, and the rit. leading to a sustained chord all match this very well-known piece.

(Well, it’s right about the 3/8 meter and the E minor.)

GPT:

The piece appears to be “Allegretto” by Cornelius Gurlitt. I would treat this as a likely identification rather than absolute, since the image is cropped and does not show the title page.

Gemini:

This is No. 39 from his famous instructional book, École préliminaire de piano, Op. 101. It is a very popular piece for beginning piano students and is frequently featured in early-grade exam syllabi (like the ABRSM Initial Grade).

All models still struggle with very basic music understanding

Across every test I ran, no model reliably identified individual notes, even on pieces it had just correctly named and attributed to a composer. Once the questions moved past key signatures and into the actual notes, all three started making things up.

Note identification is still hit-or-miss

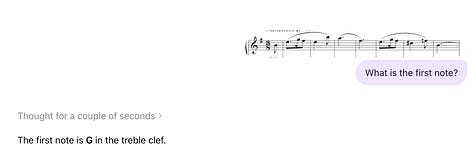

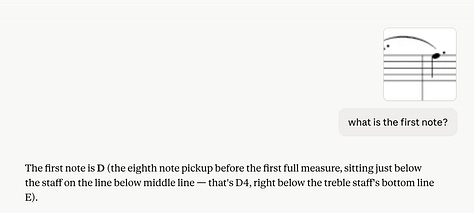

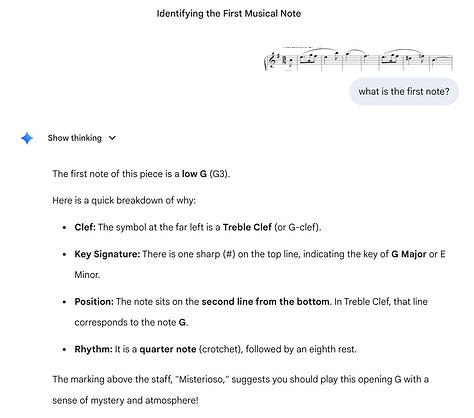

In the first example, I uploaded the first line of the Harry Potter theme (a piano rendition) and asked what the first note was. This is a fairly basic question for any beginning music student (the answer is B).

❌ GPT says that it is G

❌ Gemini says that it is a G, and it is a “quarter note followed by an eighth rest” (both of these are wrong)

❌ Claude kind of keeps digging itself into a hole:

The first note is D (the eighth note pickup before the first full measure, sitting just below the staff on the line below middle line — that’s D4, right below the treble staff’s bottom line E).

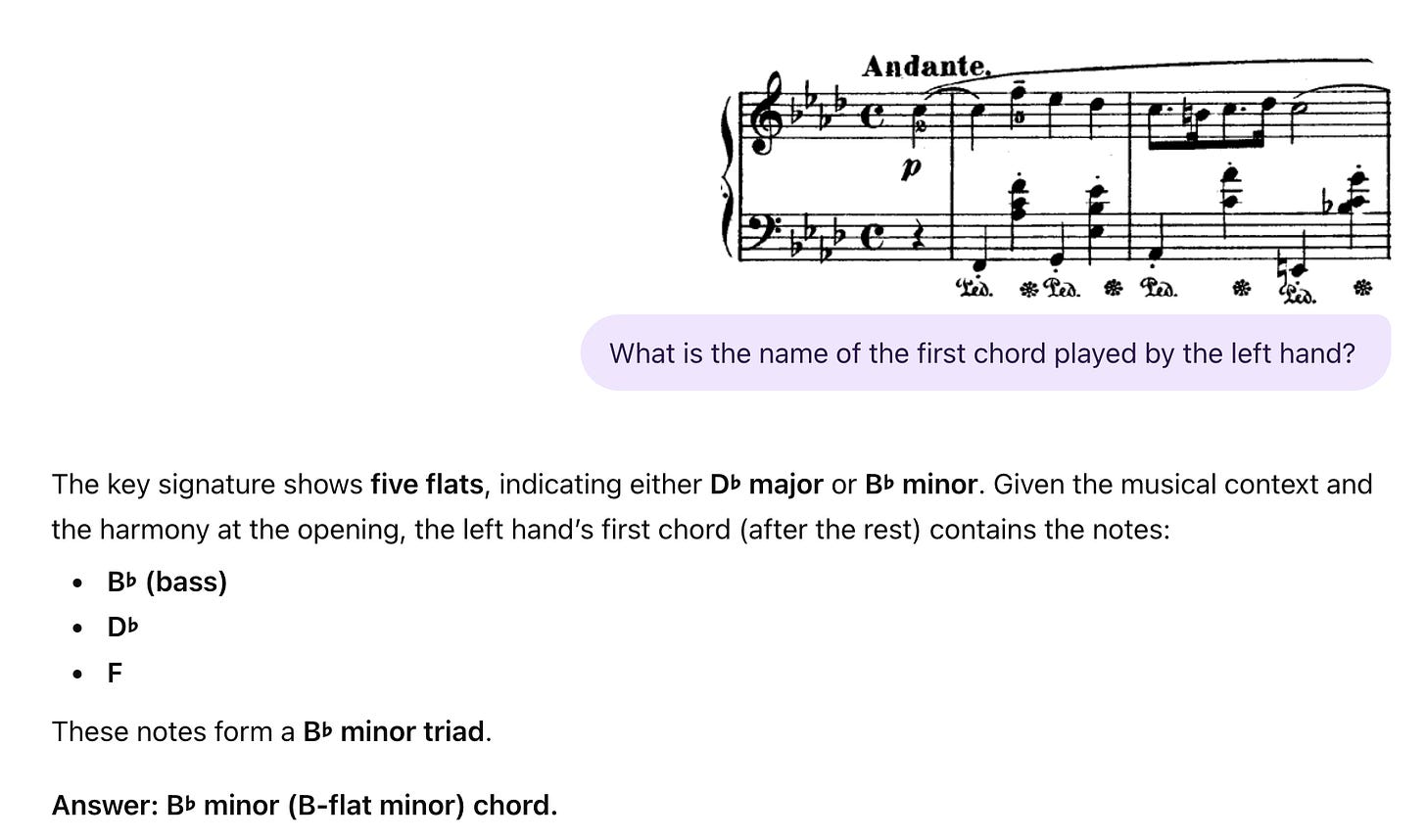

LLMs struggle to identify accidental notes

Maybe that piece was just hard, so I asked a different question about a different piece. For the sharp-eyed musician, this is the beginning of Rimsky-Korsakov’s famous “Flight of the Bumblebee.”

I asked what the first accidental on the line was (the answer is the second note, a D sharp).

❌ GPT says: E natural

❌ Gemini says: C sharp

❌ Claude says: C sharp

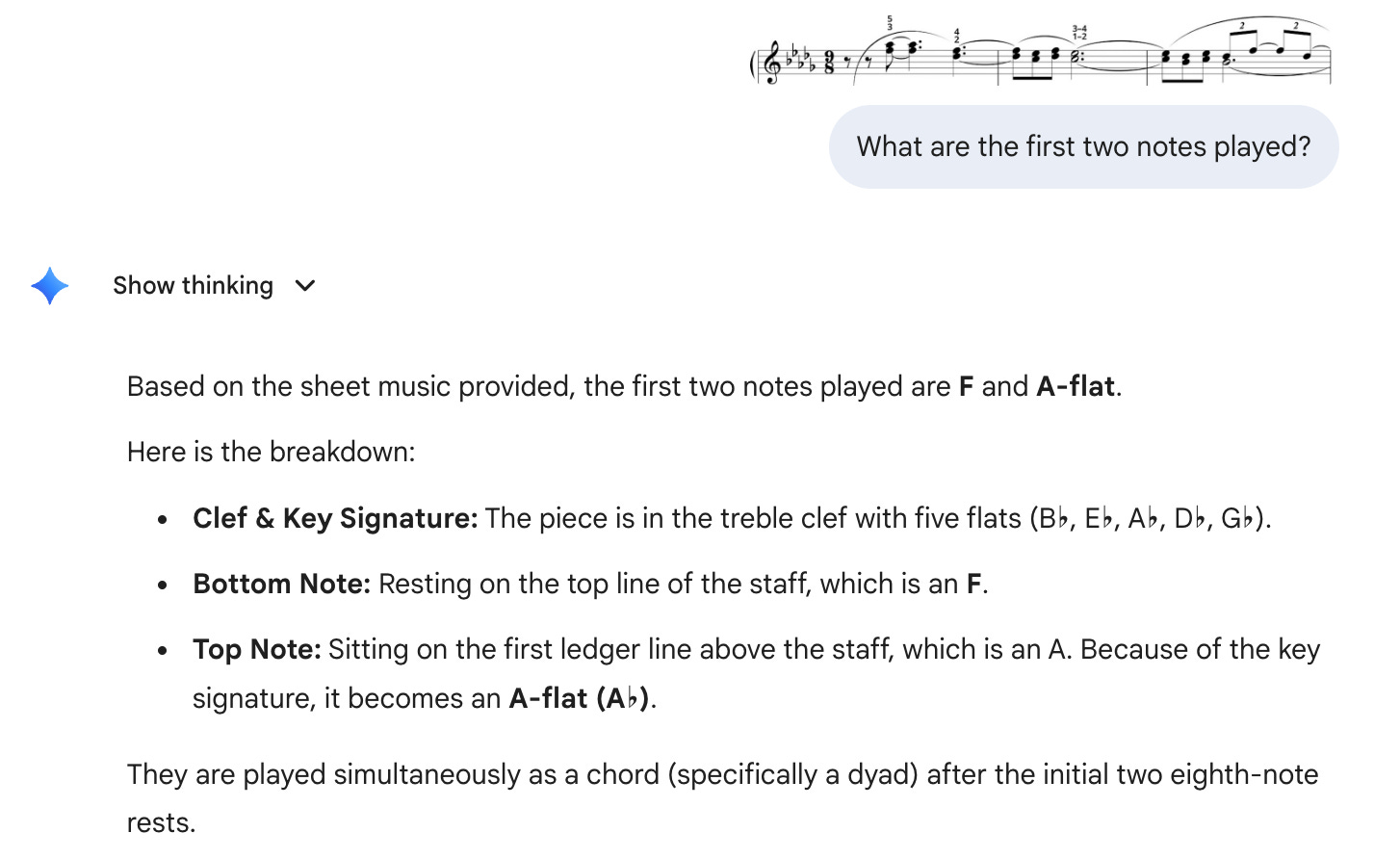

Even on pieces they recognize, notes trip them up

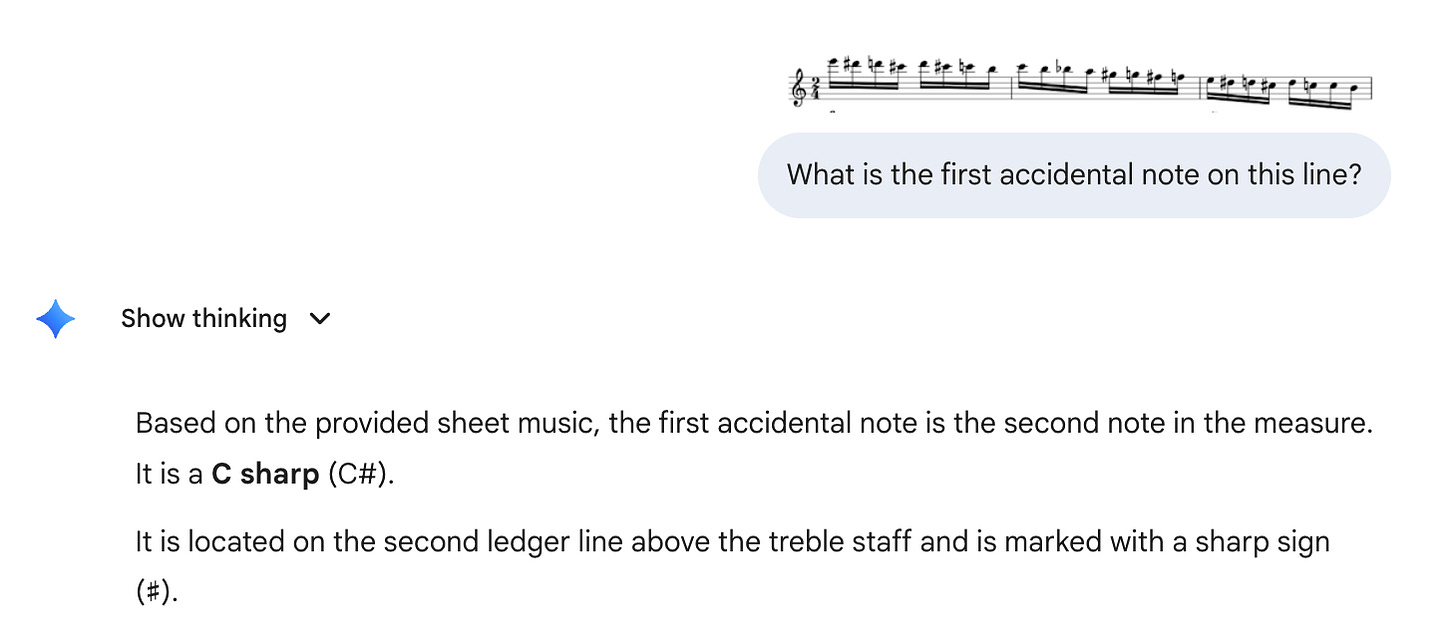

Okay, so maybe the models just aren’t familiar with these pieces. So let’s try Clair de Lune, which every model correctly identified by name. Surely they know something as basic as its first notes, right? (The answer is F and A flat.)

❌ GPT says: B flat and D flat

❌ Claude says: E flat and C

✅ Finally, a model that got it right! Gemini identifies both notes correctly.

Worth flagging: this was the one moment in my testing where a model nailed the actual notes of a piece it had recognized. So it’s not impossible; it’s just wildly inconsistent. Gemini got the first two notes of Clair de Lune, yet the same model couldn’t tell me what was on the page for Flight of the Bumblebee.

When you can’t read but you can still make music

As I have shown, the LLMs all struggle to do basic things like identify the first note of a piece of sheet music.

But can they make music?

I prompted each LLM with “Draw me sheet music for a new song of your own creation.” Here are the results.

You can be the judge and tell me which one is your favorite.

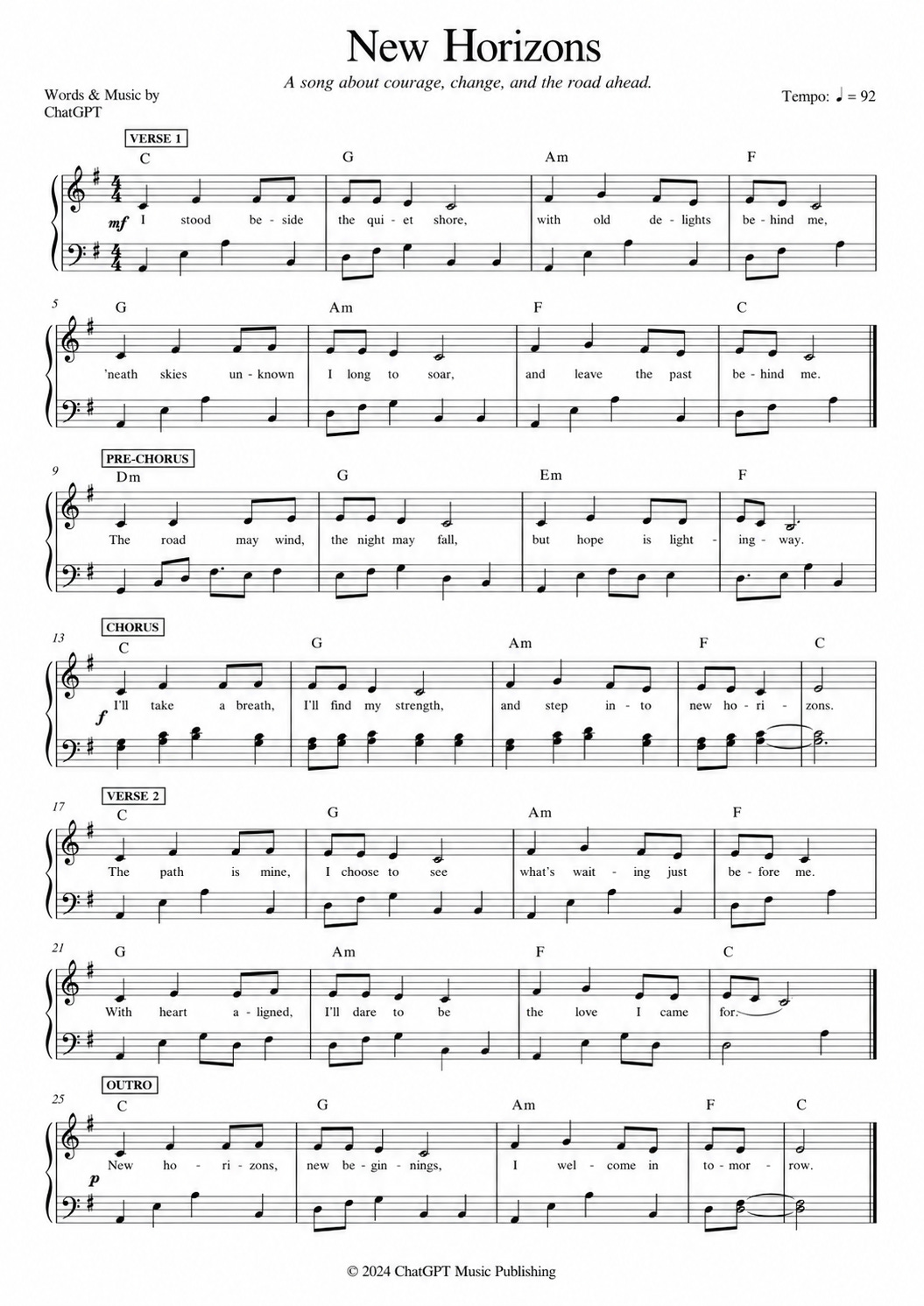

ChatGPT

ChatGPT wrote me an entire piece. At first glance it looks pretty impressive, with chord symbols, notation, and even lyrics! Look at the details, though, and a few things are off. (Hint: the first chord is not C. And judging by the chords, the whole piece looks like it should be in C major, so why does the key signature have one sharp?) Still, good effort on the lyrics and the song structure (Verse 1, Pre-Chorus, etc.), as well as the measure numbers, which are mostly correct.
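Why is one sharp a red flag? A key signature’s count of sharps or flats (MusicXML stores it as a signed `<fifths>` number) points to exactly one major key and its relative minor on the circle of fifths, and it fixes which letters are altered throughout the piece. Here is a minimal Python sketch of that lookup; the table and function names are hypothetical illustrations, not from any library.

```python
# Major keys around the circle of fifths, indexed by fifths + 7
# (index 0 = seven flats, index 7 = no accidentals, index 14 = seven sharps).
MAJOR_BY_FIFTHS = ["Cb", "Gb", "Db", "Ab", "Eb", "Bb", "F", "C",
                   "G", "D", "A", "E", "B", "F#", "C#"]
# Relative minor of each major key above (same key signature).
MINOR_BY_FIFTHS = ["Ab", "Eb", "Bb", "F", "C", "G", "D", "A",
                   "E", "B", "F#", "C#", "G#", "D#", "A#"]
# Order in which sharps and flats are added to a key signature.
SHARP_ORDER = "FCGDAEB"
FLAT_ORDER = "BEADGCF"


def describe_key_signature(fifths: int) -> tuple[str, str, list[str]]:
    """Map a key signature (positive = sharps, negative = flats) to its keys.

    Returns the major key, its relative minor, and the altered letters.
    """
    if not -7 <= fifths <= 7:
        raise ValueError("a key signature has at most 7 sharps or flats")
    major = MAJOR_BY_FIFTHS[fifths + 7]
    minor = MINOR_BY_FIFTHS[fifths + 7]
    if fifths > 0:
        altered = [letter + "#" for letter in SHARP_ORDER[:fifths]]
    else:
        # For fifths == 0 this slice is empty, so no letters are altered.
        altered = [letter + "b" for letter in FLAT_ORDER[:-fifths]]
    return f"{major} major", f"{minor} minor", altered


print(describe_key_signature(1))  # ('G major', 'E minor', ['F#'])
```

With one sharp, every F in the piece is read as F#, so a C-major progression that uses an F chord would need a written natural sign on each F.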

Gemini

Gemini generated an entire audio track instead. I’ve tried to embed the video in this article; in case it doesn’t work, the screenshot serves as proof. It was also really impressive! Gemini seemed to play to its strengths, using Lyria, its existing audio-generation tool, to create a new piece of music.

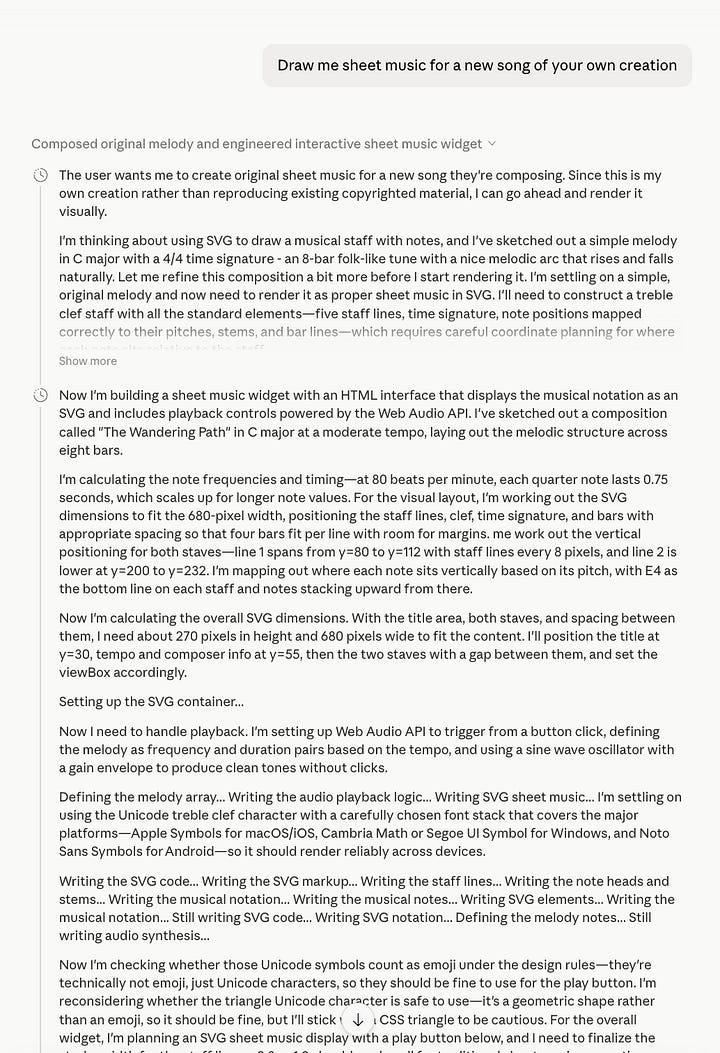

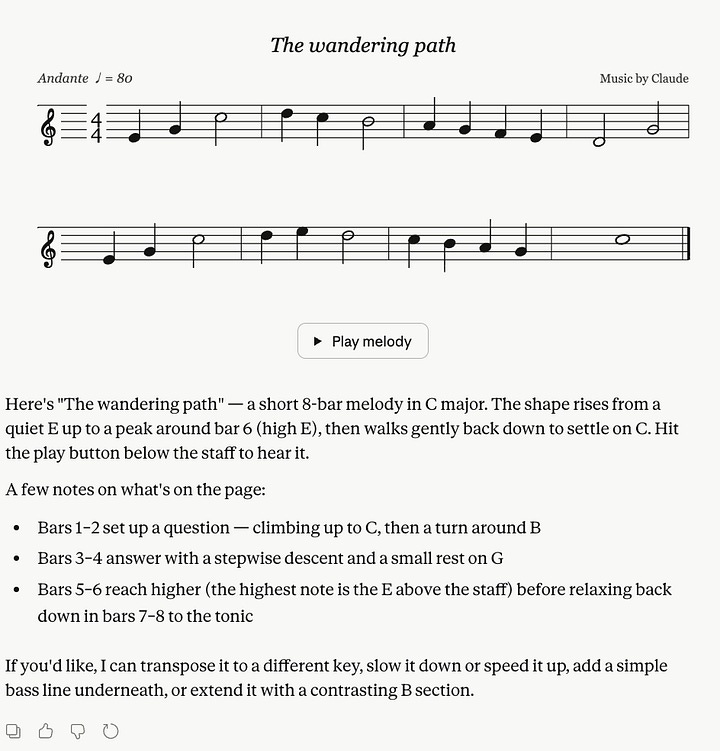

Claude

Claude thought for a really long time. If you look at its thinking, you’ll see it working through the steps of making its own creation:

Since this is my own creation rather than reproducing existing copyrighted material, I can go ahead and render it visually …I’m thinking about using SVG to draw a musical staff with notes, and I’ve sketched out a simple melody in C major with a 4/4 time signature - an 8-bar folk-like tune with a nice melodic arc that rises and falls naturally…. Now I’m building a sheet music widget with an HTML interface that displays the musical notation as an SVG and includes playback controls powered by the Web Audio API. I’ve sketched out a composition called “The Wandering Path” in C major at a moderate tempo, laying out the melodic structure across eight bars.

Conclusion

So why is this still hard?

I don’t have a definitive answer, but I have a guess. Sheet music sits in an awkward spot for multimodal models: it’s visual, but the meaning lives in tiny spatial relationships, such as which line of the staff a notehead touches, whether the stem points up or down, and whether that little squiggle is a flat or a natural. A few pixels of vertical offset can change the note. And the details really matter: telling a G from a B, or a flat from a natural, is essential to understanding the music!

Sheet music is also probably a rounding error in training data compared with the volume of natural images on the internet. And next to competing priorities such as building top coding agents and AI scientists (e.g., GPT-Rosalind), I would guess (even if I didn’t work at an AI lab) that accurately reading sheet music is not the capability stakeholders are most excited about.

Audio-generation models like Suno and Lyria are a totally different beast: they’re trained on actual audio, not images of notation. That may be why we’ve ended up in this funny place where AI can compose a passable folk song but can’t tell me what the first notes of Clair de Lune are.
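To make the “tiny spatial relationships” point concrete: in treble clef, every line and every space is one diatonic step, so a notehead’s pitch is simply its vertical position counted from the bottom line (E4). The sketch below is a deliberately simplified model, assuming a clean, horizontal staff, treble clef only, and no accidentals or key signature; `treble_pitch` and `pitch_from_pixels` are hypothetical helpers, not part of any optical music recognition library.

```python
LETTERS = "CDEFGAB"  # diatonic letter names, ascending within an octave

# Absolute diatonic index of the treble clef's bottom line, E4
# (octave * 7 + position of the letter within LETTERS).
TREBLE_BOTTOM_LINE = 4 * 7 + LETTERS.index("E")


def treble_pitch(step: int) -> str:
    """Pitch of a notehead `step` staff positions above the bottom line.

    Each staff position (line, then space, then line, ...) is one diatonic
    step: 0 is the bottom line (E4), 1 the first space (F4), 4 the middle
    line (B4), and -1 the space just below the staff (D4).
    Accidentals and key signatures are ignored.
    """
    absolute = TREBLE_BOTTOM_LINE + step
    return f"{LETTERS[absolute % 7]}{absolute // 7}"


def pitch_from_pixels(notehead_y: float, bottom_line_y: float,
                      line_spacing: float) -> str:
    """Hypothetical helper: snap a notehead's pixel height to the nearest staff position.

    Image y coordinates grow downward, so a notehead above the bottom line
    has a smaller y. One staff position is half the gap between two lines.
    """
    step = round((bottom_line_y - notehead_y) / (line_spacing / 2))
    return treble_pitch(step)


# With 10 px between staff lines, one staff position is only 5 px tall.
for y in (77, 80, 83):
    print(y, pitch_from_pixels(y, bottom_line_y=100, line_spacing=10))
# 77 C5
# 80 B4
# 83 A4
```

In this toy setup, a notehead centered at y=80 reads as B4, but a 3 px shift in either direction turns it into A4 or C5. The G and B mentioned above (G4 and B4) sit only two staff positions, one line spacing, apart.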

Too bad about my music theory app idea

I did try building a prototype of my music theory app with Claude Code. I wanted it to annotate Chopin’s Nocturne Op. 55, the piece I’m currently working on. For the music-reading part (“optical music recognition”), I didn’t use an LLM, since this article suggests it might be the weak link. Instead I used Oemer, a Python deep-learning library that converts photos of printed sheet music into MusicXML. The MusicXML was then annotated with music21 and rendered with Verovio.
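The appeal of this pipeline is that once Oemer has produced MusicXML, a question like “what’s the first note?” stops being a vision problem. MusicXML spells out every pitch as a `<step>` letter, an optional `<alter>` (-1 for flat, +1 for sharp), and an `<octave>`. In the prototype, music21 does the real parsing; purely to show what that hand-off contains, here is a standard-library sketch that reads note names from a tiny, made-up `score-partwise` fragment (not actual Oemer output). `note_names` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

# A tiny, made-up MusicXML fragment for illustration (not real Oemer output):
# one measure holding a D#5, an E5, and a rest.
SAMPLE_MUSICXML = """
<score-partwise version="3.1">
  <part-list>
    <score-part id="P1"><part-name>Piano</part-name></score-part>
  </part-list>
  <part id="P1">
    <measure number="1">
      <note><pitch><step>D</step><alter>1</alter><octave>5</octave></pitch><duration>1</duration></note>
      <note><pitch><step>E</step><octave>5</octave></pitch><duration>1</duration></note>
      <note><rest/><duration>1</duration></note>
    </measure>
  </part>
</score-partwise>
"""

# Spelling of each semitone alteration; microtonal values fall back to "?".
ACCIDENTALS = {-2: "bb", -1: "b", 0: "", 1: "#", 2: "##"}


def note_names(musicxml: str) -> list[str]:
    """Return pitched notes in document order (part by part); rests are skipped."""
    root = ET.fromstring(musicxml.strip())
    names = []
    for note in root.iter("note"):
        pitch = note.find("pitch")
        if pitch is None:  # rests (and unpitched percussion) have no <pitch>
            continue
        step = pitch.findtext("step")
        # <alter> is optional and may be fractional; round to whole semitones.
        alter = round(float(pitch.findtext("alter", default="0")))
        octave = pitch.findtext("octave")
        names.append(f"{step}{ACCIDENTALS.get(alter, '?')}{octave}")
    return names


print(note_names(SAMPLE_MUSICXML))  # ['D#5', 'E5']
```

Real piano scores are messier (two staves in one part, `<backup>` elements, chord tones marked with `<chord/>`), which is exactly the bookkeeping a library like music21 takes care of. The hard part remains the OMR step that produces the MusicXML in the first place.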

Here’s how the first attempt went:

The honest update on LLM music understanding since 2024 is narrower than I expected: reading key and time signatures is mostly solved, and popular pieces are easier to identify (probably because they’re more common in training data, not because the models got better at reading). Everything past that, such as reading actual notes and chords, doesn’t seem to have improved much, at least as far as I can tell as an end user.

Quick note: I'm a research engineer at Google DeepMind. Opinions here are my own. This article is about personal experiments I ran. I tested Gemini alongside the others as a regular chatbot user would.

In this article, I use “LLM,” “AI,” “chatbot,” and “multimodal language model” interchangeably. They all refer to the same thing: the multimodal understanding built into LLM chatbots.